AI code review is transforming software development, but where does your source code go when AI analyzes it?

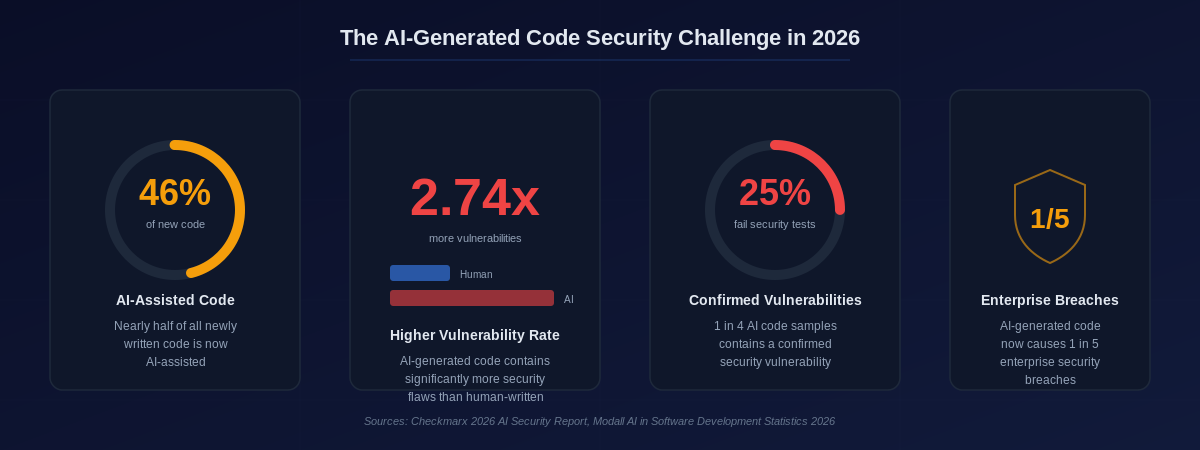

The adoption of AI-powered code review tools has exploded in 2026. Nearly half of all newly written code is now AI-assisted, and development teams across every industry are integrating large language models into their pull request workflows. The productivity gains are undeniable: faster reviews, fewer bugs reaching production, and automated detection of security vulnerabilities before they ever get merged.

But for enterprises operating in regulated industries — finance, defense, healthcare, government, and critical infrastructure — a fundamental question remains unanswered by most AI code review solutions: where does your proprietary source code go when it's sent to an AI model for analysis?

The answer, for the majority of cloud-based AI review tools, is simple and concerning: it leaves your network.

The Hidden Cost of Cloud-Based AI Code Review

Cloud-based AI code review tools have made powerful technology accessible. Services like GitHub Copilot, CodeRabbit, and other SaaS platforms offer impressive capabilities out of the box. For open-source projects and startups without strict compliance requirements, they're often an excellent choice.

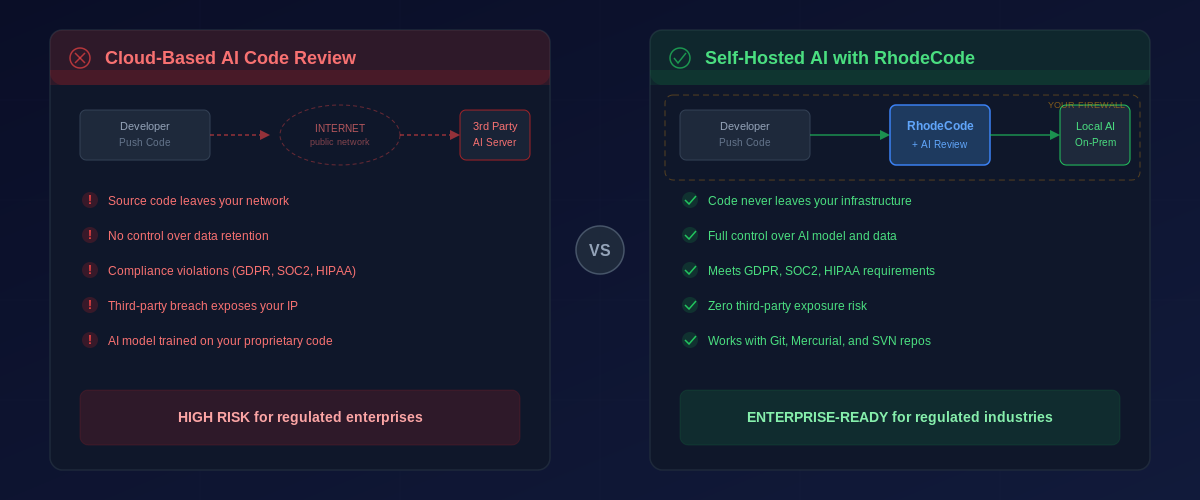

However, for enterprises with regulatory obligations, the architecture of these tools introduces significant risk. When a developer opens a pull request and a cloud-based AI tool analyzes it, the following happens:

Your source code — including business logic, algorithms, API structures, database schemas, and potentially embedded secrets — is transmitted over the internet to a third-party server. That server processes the code using an AI model that may retain data for training purposes or logging. The analysis results travel back across the public network to your repository.

At every step, your intellectual property is exposed to risks that fall outside your control.

Interesting Fact: According to the Checkmarx 2026 AI Security Report, AI-generated code now causes 1 in 5 enterprise security breaches, making the question of where and how AI interacts with your codebase more critical than ever.

The Scale of AI-Generated Code Vulnerabilities in 2026

Before discussing the self-hosted solution, it's worth understanding why AI code review has become so essential — and simultaneously so risky.

The numbers paint a stark picture. Research from 2026 shows that while AI dramatically accelerates development velocity, it introduces new categories of risk that traditional review processes weren't designed to catch.

AI-generated code contains 2.74 times more vulnerabilities than human-written code. One in four AI-generated code samples contains a confirmed security vulnerability. And with nearly half of all new code being AI-assisted, organizations face an unprecedented volume of potentially insecure code entering their repositories every day.

This creates a paradox: you need AI to review the growing volume of AI-generated code, but the most accessible AI review tools require you to send that code to external servers. For enterprises bound by GDPR, SOC 2, HIPAA, FedRAMP, or internal security policies, this is often a non-starter.

Cloud-Based vs. Self-Hosted AI Code Review: A Critical Comparison

The distinction between cloud-based and self-hosted AI code review is not merely a deployment preference. It represents a fundamental architectural decision that affects compliance posture, data sovereignty, and long-term risk exposure.

With cloud-based tools, your source code must traverse the public internet and reside, even temporarily, on infrastructure you don't control. You have limited visibility into how the AI provider processes, stores, or potentially trains on your data. And when a third-party AI provider experiences a breach — as has happened with increasing frequency — your proprietary code could be among the exposed assets.

Self-hosted AI code review fundamentally changes this equation. Your code never leaves your network perimeter. You control the AI model, the data pipeline, and the retention policies. Compliance audits become dramatically simpler because you can demonstrate complete chain-of-custody for every line of code that passes through your review process.

For organizations managing sensitive intellectual property — whether that's proprietary trading algorithms, defense system firmware, medical device software, or financial platform logic — the self-hosted approach isn't just preferable. It's the only architecture that meets their regulatory requirements.

| Consideration | Cloud-Based AI Review | Self-Hosted AI Review |
|---|---|---|
| Data Residency | Code leaves your network | Code stays on your infrastructure |
| Data Retention | Controlled by third party | Controlled by your team |
| Compliance | Complex; depends on vendor | Simplified; you own the audit trail |
| Model Selection | Limited to vendor's model | Any model: Claude, GPT, Gemini, local LLMs |
| Breach Exposure | Third-party breach exposes your IP | Zero external attack surface |
| VCS Support | Typically Git-only | RhodeCode: Git, Mercurial, and SVN |
| Network Dependency | Requires internet access | Works air-gapped or offline |

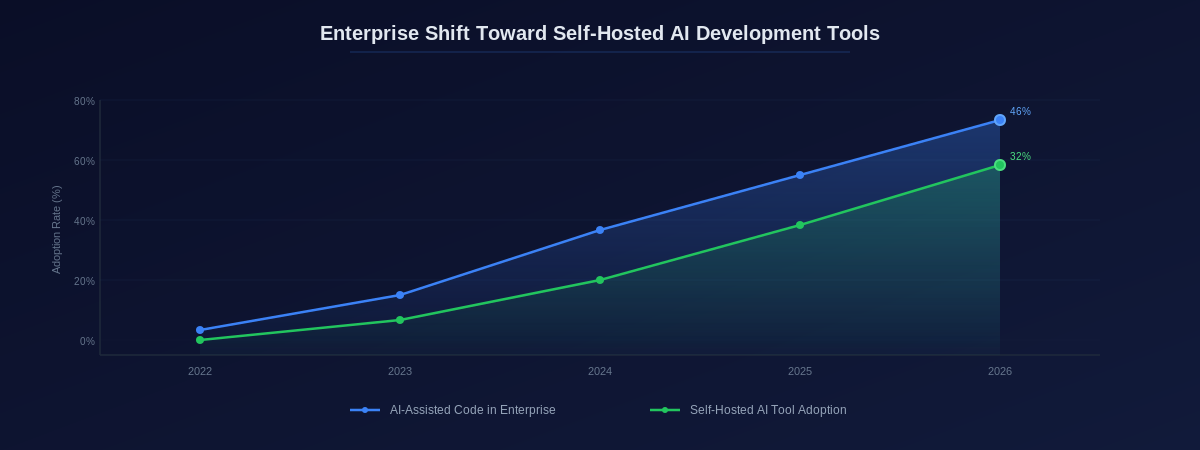

The Enterprise Shift Toward Self-Hosted AI Development Tools

The trend toward self-hosted AI tools is not unique to code review. Across the software development lifecycle, enterprises are increasingly moving AI capabilities behind the firewall. Self-hosted coding assistants, local LLM deployments, and on-premises AI sandboxes are becoming standard components of the enterprise development stack.

The driving forces behind this shift are consistent: data sovereignty, regulatory compliance, and the need to maintain control over sensitive assets. Companies are moving from managed sandbox services to self-hosted AI environments so that personally identifiable information and proprietary source code stay within their security perimeter, which in turn simplifies compliance audits.

This trend creates a natural alignment with platforms like RhodeCode, which have been built from the ground up for behind-the-firewall deployment and enterprise-grade access control.

How RhodeCode Brings AI Code Review Behind the Firewall

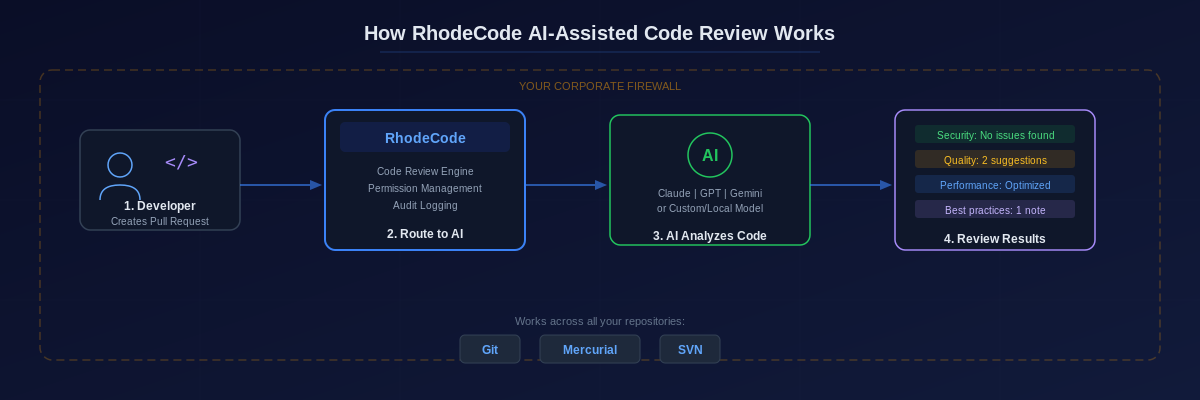

RhodeCode has integrated AI-assisted code review directly into its self-hosted platform. Enterprise teams can connect it to ChatGPT, Gemini, Claude, or a custom AI model and receive automatic analysis of pull requests. When the model is hosted locally, your source code never leaves your infrastructure; when you choose a cloud API, code diffs go only to the endpoint you have explicitly approved.

Here's how it works in practice. A developer creates a pull request in RhodeCode. The platform routes the code diff to your configured AI model — whether that's a cloud API endpoint you've approved through your security review process, or a locally-hosted model running entirely on your own hardware. The AI analyzes the code for security vulnerabilities, quality issues, performance concerns, and best practice violations. The results appear directly in the RhodeCode pull request interface, alongside human reviewer comments.
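As a rough illustration of the "route the diff to the configured model" step, the sketch below builds a review request for a pull request diff. It assumes the model endpoint accepts an OpenAI-style chat-completions payload, which several self-hosted inference servers also expose. The model name, prompt, and function name are hypothetical and are not taken from RhodeCode's configuration or API.

```python
import json

# Hypothetical values for illustration; these are not RhodeCode settings.
REVIEW_MODEL = "local-code-model"
SYSTEM_PROMPT = (
    "You are a code reviewer. Report security vulnerabilities, quality "
    "issues, performance concerns, and best-practice violations in the diff."
)


def build_review_request(diff_text: str, model: str = REVIEW_MODEL) -> dict:
    """Build a chat-completions style payload asking a model to review a diff."""
    if not diff_text.strip():
        raise ValueError("diff is empty; nothing to review")
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": "Review this diff:\n\n" + diff_text},
        ],
        # Deterministic output makes review comments easier to reproduce.
        "temperature": 0,
    }


if __name__ == "__main__":
    sample_diff = (
        "--- a/app.py\n+++ b/app.py\n@@ -1 +1 @@\n"
        "-x = 1\n+x = eval(user_input)\n"
    )
    print(json.dumps(build_review_request(sample_diff), indent=2))
```

The payload is only constructed here, not sent. Where it gets posted (a local inference server or an approved cloud API) is the decision the rest of this article is about.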

The key architectural advantage is that RhodeCode acts as the control plane. You decide which AI model processes your code. You configure which repositories and branches trigger AI review. You control the data flow from end to end. And because RhodeCode is installed on your infrastructure, that control plane always sits within your security perimeter; with a locally hosted model, so does the entire pipeline.

Unique Advantages of RhodeCode's Approach

Multi-VCS AI Review. RhodeCode is the only platform that brings AI-assisted code review to Git, Mercurial, and Subversion repositories through a single, unified interface. For enterprises that still rely on SVN for legacy systems or Mercurial for specific workflows, this means every repository benefits from AI analysis — not just the ones that happen to use Git.

Enterprise Permission Integration. RhodeCode's advanced permission system extends to AI review. You can configure which teams, repositories, or branches have AI review enabled, and which AI model each team uses. Teams working on classified projects might use an air-gapped local model, while less sensitive repositories might connect to an approved cloud AI endpoint.

Audit Trail and Compliance. Every AI review interaction is logged within RhodeCode's audit system. For SOC 2, HIPAA, or internal compliance audits, you have a complete record of when AI analyzed code, which model was used, and what recommendations were generated — all stored on your own infrastructure.

Secret Scanning Integration. RhodeCode 5.4.0 introduced the ability for admins to scan repositories for potentially sensitive information like passwords and secret keys. Combined with AI-assisted review, this creates a dual-layer defense: AI catches logic-level vulnerabilities while the secret scanner identifies accidentally committed credentials — a particularly common problem in AI-generated code.
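To make the secret-scanning layer concrete, here is a minimal sketch of a pattern-based check over the added lines of a diff. It is not RhodeCode's scanner. The three patterns and the function name are illustrative assumptions, and a production rule set would be far broader.

```python
import re

# Illustrative patterns only; a real scanner needs a much larger rule set.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
    "hardcoded_password": re.compile(
        r"(?i)\b(?:password|passwd|secret)\s*[:=]\s*['\"][^'\"]{4,}['\"]"
    ),
}


def scan_added_lines(diff_text: str) -> list[tuple[int, str]]:
    """Return (diff line number, rule name) for each added line matching a rule."""
    findings = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Inspect only added lines and skip the "+++ b/file" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, rule))
    return findings
```

Running a check like this before the diff reaches any model means an accidentally committed credential can be flagged, or the review blocked, before the code is sent anywhere.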

Practical Configuration: Connecting AI Models to Your RhodeCode Instance

RhodeCode's AI integration supports multiple deployment models, allowing enterprises to match their AI configuration to their specific security requirements.

Option 1: Approved Cloud AI Endpoint. For organizations that have completed security review of specific cloud AI providers, RhodeCode can connect to Claude, GPT, or Gemini APIs. Your code is sent directly from your RhodeCode instance to the AI provider — never through a third-party middleware layer. You maintain control over API keys, rate limits, and which repositories are eligible for cloud AI review.

Option 2: Self-Hosted Open-Source Model. For maximum data sovereignty, organizations can deploy open-source models like Llama, Mistral, or CodeLlama on their own hardware and connect RhodeCode to the local inference endpoint. In this configuration, code never leaves your network at all — even the AI model runs on infrastructure you own and control.

Option 3: Hybrid Configuration. Many enterprises adopt a tiered approach. Public or open-source repositories might use cloud AI endpoints for convenience, while repositories containing proprietary business logic or regulated data exclusively use locally-hosted models. RhodeCode's per-repository configuration makes this straightforward to implement and enforce.
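The tiered policy described in these options can be sketched as a small routing function. Everything named here (the endpoint URLs, the repository names, the tier labels) is hypothetical and shown only to illustrate the idea. It is not RhodeCode's configuration format.

```python
import ipaddress
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class Endpoint:
    name: str
    url: str


# Hypothetical endpoints and repository tiers, for illustration only.
LOCAL = Endpoint("local-llm", "http://10.0.12.5:8000/v1/chat/completions")
CLOUD = Endpoint("approved-cloud", "https://api.example.com/v1/chat/completions")
REPO_TIERS = {"trading-engine": "restricted", "public-docs": "open"}


def is_internal(url: str) -> bool:
    """True if the URL host is a literal private or loopback IP address."""
    host = urlparse(url).hostname or ""
    try:
        address = ipaddress.ip_address(host)
    except ValueError:
        return False  # hostnames are treated as external in this sketch
    return address.is_private or address.is_loopback


def select_endpoint(repo: str) -> Endpoint:
    """Pick an endpoint by tier; unknown repositories default to restricted."""
    tier = REPO_TIERS.get(repo, "restricted")
    endpoint = CLOUD if tier == "open" else LOCAL
    if tier != "open" and not is_internal(endpoint.url):
        raise RuntimeError(f"{repo}: restricted code may not use {endpoint.url}")
    return endpoint
```

Defaulting unknown repositories to the restricted tier is a deliberate choice in this sketch: a repository nobody has classified yet is reviewed by the local model rather than sent to a cloud endpoint.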

Why This Matters More in 2026 Than Ever Before

The convergence of three trends makes self-hosted AI code review not just sensible but essential for enterprises.

First, the volume of AI-generated code has reached a tipping point. With nearly half of new code being AI-assisted, the attack surface for AI-introduced vulnerabilities has grown proportionally. Organizations need AI to review AI — but they need it on their terms.

Second, regulatory frameworks are catching up to AI. Data protection regulations are increasingly explicit about where and how AI processes sensitive data. Organizations that can demonstrate their AI code review pipeline operates entirely within their infrastructure have a dramatically stronger compliance posture.

Third, the cost of getting it wrong has never been higher. With AI-generated code reportedly behind 1 in 5 enterprise security breaches, according to the Checkmarx report cited earlier, the security of the code review process itself has become a board-level concern. The question is no longer whether to adopt AI code review, but whether your AI review architecture matches the sensitivity of your codebase.

Frequently Asked Questions

Can RhodeCode AI code review work completely offline?

Yes. By connecting RhodeCode to a self-hosted AI model running on your local infrastructure, the entire AI code review pipeline operates without any internet connectivity. This is essential for air-gapped environments common in defense, government, and critical infrastructure sectors.

Which AI models does RhodeCode support for code review?

RhodeCode supports any AI model accessible via an API endpoint, including Claude, ChatGPT, Gemini, and self-hosted open-source models like Llama and Mistral. You can configure different models for different repositories based on your security requirements.

Does RhodeCode's AI review work with Subversion and Mercurial, or only Git?

RhodeCode provides AI-assisted code review across all three version control systems it supports: Git, Mercurial, and Subversion. This is unique in the market — most AI code review tools only support Git repositories.

How does self-hosted AI code review affect compliance audits?

Self-hosted AI code review significantly simplifies compliance. Because all data processing occurs within your infrastructure, you can demonstrate complete data chain-of-custody. RhodeCode's audit logging captures every AI interaction, providing the documentation that SOC 2, HIPAA, GDPR, and similar frameworks require.

What's the performance impact of running AI code review on-premises?

Performance depends on your AI model and hardware configuration. Cloud AI endpoints (Claude, GPT, Gemini) provide fast response times without local hardware investment. Self-hosted models typically require GPU infrastructure, but requests stay on your local network and avoid the round trip over the public internet. Most enterprises find that AI review adds seconds, not minutes, to their pull request workflow.

Conclusion: The Future of AI Code Review is Behind Your Firewall

The adoption of AI-assisted code review is no longer optional for competitive software organizations. The productivity and quality gains are too substantial to ignore. But the architecture of how AI interacts with your source code matters as much as the AI's capabilities.

For enterprises that take code security, data sovereignty, and regulatory compliance seriously, self-hosted AI code review isn't a luxury — it's a requirement. Platforms like RhodeCode make this possible by bringing enterprise-grade AI analysis directly into your existing on-premises development workflow, supporting every major version control system, and keeping your most valuable intellectual property exactly where it belongs: behind your firewall.

Ready to bring AI code review behind your firewall? Explore how RhodeCode can modernize your code review workflow with self-hosted AI integration across Git, Mercurial, and SVN repositories. Try RhodeCode for free and see the difference self-hosted makes.